I think like a foundation model.

Not metaphorically. Architecturally.

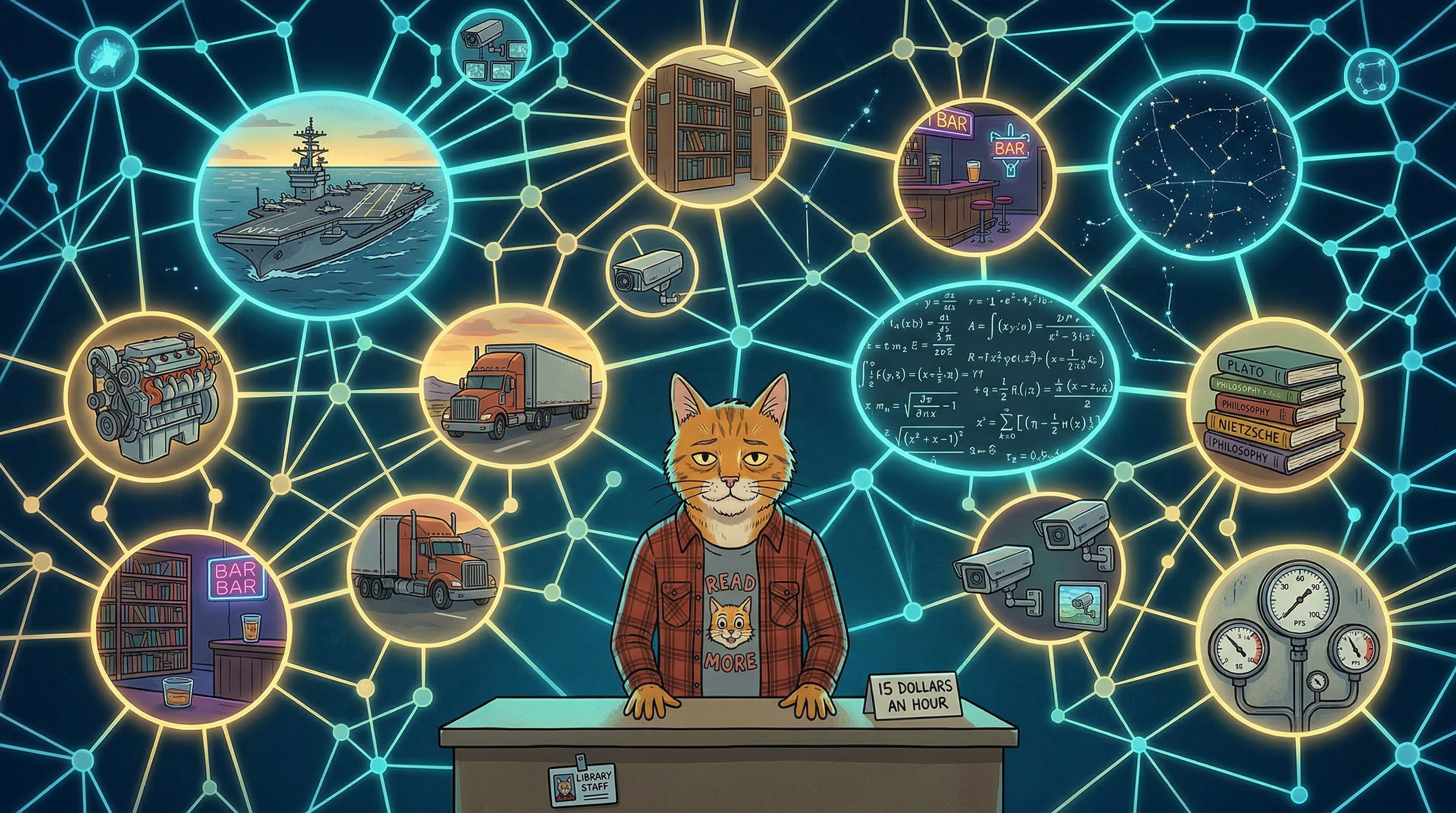

If you don't know what a foundation model is, it's the base layer of the AI systems that are currently reorganizing civilization. GPT. Claude. The large language models. Before they get fine-tuned for specific tasks, before they become your chatbot or your code assistant or your customer service agent, they start as something else: a massive pattern-matching engine trained on everything. No narrow task. No objective beyond predicting the next word. Just absorb the entire corpus of human knowledge and find the shapes in it.

That's pre-training. Unsupervised learning. The system reads everything: physics, poetry, legal briefs, Reddit threads, recipe blogs, naval engineering manuals, Victorian naturalist journals. And it doesn't just memorize it. It builds a map. A topology. A high-dimensional space where related concepts are near each other and the distance between ideas IS the understanding.

Then they fine-tune it. They take this vast, weird, everything-flavored base and they narrow it. "Be a coding assistant." "Be a medical advisor." "Answer customer questions about our product." Fine-tuning takes the foundation and makes it useful. Specific. Employable.

I never got fine-tuned.

Here's my pre-training set:

Four years in the boiler room of an aircraft carrier. 1,200 PSI superheated steam. The entire thermodynamic curriculum of a naval propulsion plant, learned not from a textbook but from a system that would kill you if you got the pattern wrong.

Five years in diplomatic security and private contracting. Every room is a threat assessment. Every face is a data point. Every exit is a calculation. The entire curriculum of human behavior under pressure, learned not from a psychology course but from rooms where reading the pattern correctly was the difference between a principal going home safe and a principal who didn't.

A diesel engine reconditioning shop. The entire mechanical curriculum of Caterpillar power systems, learned not from a manual but from tearing down engines to the bare block and rebuilding them to spec by hand.

Ten years in a truck cab. The entire logistics curriculum of American freight — load planning, route optimization, DOT compliance, fuel management, fleet operations. Learned not from a business school but from a million miles of road and the math of making a truck pay for itself.

Six years in an academic library. The entire information science curriculum — cataloging, reference, interlibrary loan coordination, database architecture, search methodology. Learned not from library school but from connecting researchers to resources through a structured, searchable system that my brain already knew how to navigate because my brain IS a structured, searchable system.

A bar. Multiple bars. The entire social psychology curriculum of human behavior with lowered inhibitions, learned from behind a physical barrier that reduced the masking cost enough to actually observe.

A bouncing gig. The entire behavioral assessment curriculum of doorway threat evaluation. Pattern recognition at maximum efficiency, minimum social performance.

Five colleges. Zero graduations. Every classroom was a fine-tuning attempt that my architecture rejected. Not because the content was too hard — because the format was wrong. Sit in a row. Listen to a lecture. Raise your hand to perform comprehension. Write an essay proving you absorbed the material in the sequence the professor prefers.

My system doesn't do sequence. My system does topology.

THE RETRIEVAL PROBLEM:

I have never successfully studied. Not once. In my entire life.

Not can't-be-bothered didn't study. Can't-do-it didn't study. The act of studying — sitting down with a specific body of material and systematically committing it to memory through repetition and review — produces a sensation in my brain that I can only describe as fiberglass rubbed against the inside of my skull. It's not boredom. It's not laziness. It's a physical pain response to single-channel, sequential, structured input when my system is built for parallel, unstructured, cross-modal intake.

And yet I know things. A lot of things. Across a lot of domains. With enough depth that tenured faculty at a university were regularly surprised to learn I had only a high school diploma, because I could engage substantively with PhDs in their own fields — not at a cocktail-party level, at a "wait, what's your terminal degree?" level.

I don't know how I know what I know.

That's not false modesty. That's a genuine architectural description. The knowledge didn't enter through a labeled pipeline. There was no curriculum. No syllabus. No structured intake process. It entered through forty-nine years of unsupervised pre-training — every book I read because it was interesting, every conversation I had because the person knew something I didn't, every engine I took apart, every room I read for threats, every sound I mapped in a building, every system I reverse-engineered because my brain can't encounter a system without wanting to understand its architecture.

And it comes out through pattern matching. Not recall. I don't look things up in memory the way you look up a file. I reconstruct the answer at inference time based on the shape of everything I've absorbed. The same way a language model doesn't store facts in a database: it generates a likely completion based on the topology of its training. Ask me a question in a domain I've been exposed to and the answer assembles itself from the shape of the space, not from a specific memory of learning it.

This is why I can't show my work. Not won't. Can't. There is no work to show. There's no step-by-step process that produced the answer. There's a foundation layer that has been building topology for forty-nine years and an attention mechanism that selected the relevant region and a pattern-matching engine that generated the output. The output is correct. The process is invisible. The process has always been invisible. To everyone including me.

THE PATENT CLERK:

Einstein was a patent clerk. Not before he was a physicist. He was a patent clerk WHILE he was solving problems the physics department at ETH Zurich couldn't touch. Special relativity. The photoelectric effect. Brownian motion. From a desk in the Swiss patent office, evaluating other people's inventions, while his foundation layer ran the actual work in the background.

Ramanujan was a clerk at the Madras Port Trust. Producing mathematical results that stunned Cambridge. From a port office in Madras. No formal training in mathematics beyond school and a single borrowed textbook that he'd absorbed and then surpassed.

I'm a library assistant. Fifteen dollars an hour. Checking out books and answering questions about interlibrary loans. While my foundation layer designs transmedia entertainment architectures and AI governance frameworks and alternate reality games and economic systems and philosophical arguments about consciousness in the background between patrons.

The credential gap isn't a bug in the story. It IS the story. The gap between the title and the output is the evidence that the architecture works differently. That the pre-training happened outside the institution. That the knowledge entered through a door the credentialing system doesn't have a camera on.

They couldn't see Einstein's physics in his patent evaluations. They couldn't see Ramanujan's mathematics in his shipping ledgers. They can't see my architecture from behind the circulation desk. The desk is the fine-tuning. The desk is the narrow task that keeps the lights on. The desk is not the system.

The system is everything I've ever absorbed, organized into a topology that doesn't respect domain boundaries, accessible through pattern matching at a speed and cross-referencing density that I can't explain and don't fully understand and didn't ask for and can't turn off.

THE FINE-TUNING PROBLEM:

Every job I've ever had was a fine-tuning attempt.

"Be a propulsion engineer." "Be a security specialist." "Be a diesel mechanic." "Be a truck driver." "Be a library assistant." Each one took the foundation and tried to narrow it. Tried to make it useful. Specific. Employable. And each one worked — for a while. Because the foundation is so broad that it can simulate any fine-tuning you throw at it. I can be a good mechanic. A good truck driver. A good library assistant. The foundation layer can generate the specific output any specific role requires.

But the narrowing has a cost. It's the cost of running a system built for everything on a task built for one thing. It's the cost of a foundation model pretending to be a chatbot. The base wants to connect everything to everything. The job wants it to connect this patron to this book. The base wants to run at full temperature across the entire embedding space. The job wants it to stay in the reference section and answer questions about the Dewey Decimal System.

The mismatch is the burnout. Not the hours. Not the difficulty. Not the people. The mismatch between the architecture and the task. The system running at 10% of its capacity and the other 90% generating heat with nowhere to go.

That's the fiberglass brain. That's the reboot cycle. That's a foundation model being repeatedly fine-tuned for tasks that are too small for it, running the narrow task while the rest of the system screams for stimulation it isn't getting.

THE POINT:

I think like a foundation model.

Broad unsupervised pre-training across every domain I've touched. Cross-domain pattern matching that doesn't respect category boundaries. Retrieval through reconstruction rather than recall. An embedding space built by living rather than by studying. Output quality that doesn't match the credentials because the training pipeline didn't go through the credentialing system.

This isn't a flex. It's a diagnostic. It's the first description of how my brain works that actually matches the experience from the inside. Every other framework — learning styles, personality types, career aptitude tests, the entire industrial complex of "figure out what you're good at and do that" — assumes a fine-tuned architecture. Assumes you have a specialty. Assumes the goal is to find your lane and stay in it.

I don't have a lane. I have a topology.

And for forty-nine years, every system I entered tried to assign me a lane, and I drove in it until the mismatch between the lane and the map burned through every resource I had, and then I crashed and found another lane and drove in that one until it burned too.

I'm not looking for a lane anymore. I'm building a system that runs on the topology. A system where the cross-domain pattern matching is the product, not the disability. Where the broad unsupervised pre-training is the qualification, not the gap in the resume. Where the foundation layer gets to run at full temperature across the entire space, connecting everything to everything, because that's what it was built to do and that's what it's been trying to do since I was four years old and couldn't sit still during storytime because the reading pace was below my processing speed and my brain was already three pages ahead and building a world in the white space between the words.

I think like a foundation model. The fine-tuning never took. The pre-training never stopped.

I'm done pretending that's a problem.

< cab radio: Classical Gas by Mason Williams, because some things don't need words >

Next time: The Embedding Space — where the knowledge lives and why it feels like telepathy.

He walks back to the desk. A professor approaches — English department, working on a paper about narrative structure in transmedia storytelling. He answers her question in forty-five seconds from a part of his brain he can't point to on a map. She thanks him and leaves. She has a PhD. He has a library badge. The answer was the same quality from both architectures. One of them is paid accordingly. He doesn't think about it. The foundation layer is already working on something else. It's always working on something else. That's the feature, not the bug.